There is plenty of enthusiasm, and quite a bit of hype in various quarters, about upcoming 5G. But will it be worthwhile for everyone?

The outlook for 5G is promising because growing mobile broadband data demand will saturate LTE capacity from around 2020. Only 5G can access the higher frequencies above 6 GHz, including mmWave bands where spectrum bandwidth is most abundant. 5G's great potential in various additional services, including IoT, is predicated on network deployments that will only be made economically viable by large-scale demand for enhanced mobile broadband (eMBB).

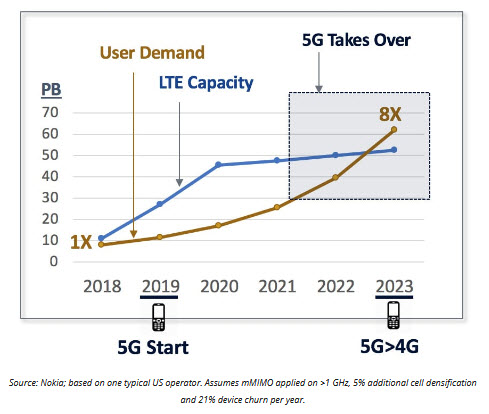

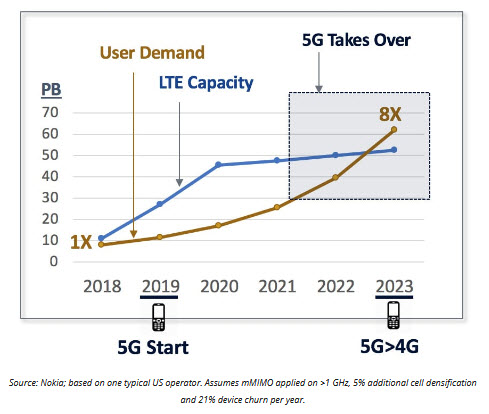

5G needed as growing MBB user demand saturates LTE capacity

Previous introductions of next-generation standards have been broadly beneficial in the long term, but the shift to 3G WCDMA was quite problematic for several years. There was insufficient initial demand for what 3G inadequately provided, and spectrum costs were too high. The 4G transition went rather better from the outset because MBB demand was already booming by then, networks were becoming congested, and LTE was desperately needed to accommodate growth.

As with previous upgrades, the key factors that will drive or inhibit the transition to upcoming 5G are consumer demand, network capacity (including spectrum supply), and devices.

Disrupters need demands to satisfy and solutions that work

The introduction of a next-generation cellular technology standard brings major changes. For vendors, it can require significant investments in technology and product development. In 5G, a lot of these are already sunk costs.

Imminently expected orders for 5G network and device products will buoy markets that had been flat-lining following a decade of rapid growth in LTE buildouts and smartphone sales. The transition also creates opportunities for challengers to displace incumbent suppliers. But 5G adoption crucially depends on two other constituencies: mobile operators and users, who, for many years, will overwhelmingly be consumers.

The switch to 2G from around 1992 was a boon for nearly everyone. With several different 1G standards there were limited economies of scale in development and production. Digital 2G technologies in fewer rival standards delivered massive efficiency and quality benefits. At first, network services were almost entirely for voice, and then also included text messaging. Subsequent generational upgrades were largely to improve data performance and capacity.

The old data problem - not enough demand

From 2001, 3G, based on 3GPP Release 99 WCDMA, promised data rates faster than 2G could deliver at that time, but there was little initial demand for data on either generation. This was a bad problem for technology product and services vendors. Even flat-rate, all-you-can-eat rate plans did little to generate demand. Excluding text messaging, data ARPUs were minuscule, at no more than low single-digit percentages of total ARPUs, outside of Japan and Korea, where data was predominantly on other standards including CDMA2000. Devices then did not have the applications, application-processing speeds, displays and battery performance required to exploit faster links. Around 2004, consumers preferred thin phones like the 2G Motorola RAZR to clunky beasts like the 3G Samsung, as shown below.

3G vs 2G: what was revolutionary and cool in 2004?

The Samsung was initially touted as a premium device, but its video telephony performed poorly, and the feature could only be used with the few others who had the same device. It was soon repositioned toward heavy users of voice calling, for example by 3 in the UK, which had copious network capacity with its new spectrum. These users were lured with low per-minute charges and large subsidies to cover the high wholesale cost of these devices.

European operators were also hampered by paying a hefty $150 billion for 3G spectrum in the early 2000s. Later 3G adopter AT&T, in the US, was able to economically refarm some of its existing spectrum.

By the time demand for faster links was substantial in the mid-2000s, GSM's EDGE technology was close to matching WCDMA's maximum speed of 384 kbps. It was the market for improved 3G with multi-megabit-per-second HSDPA data cards and dongles plugged into PCs from around 2006 that really stoked mobile data demand.

The new data problem - too much demand

This was a nice problem for suppliers of mobile technology products and operator services to fix. Further improvements to HSDPA including HSPA+ at up to 42 Mbps were widely implemented. Introduction of LTE was also timely in satisfying consumer and operator needs for increased end-user speeds and network capacity.

Mobile broadband was getting into its stride when LTE services were first launched at year-end 2009. Around 15% of 3G UMTS shipments then were of non-phone MBB devices. The smartphone revolution was already well underway, with the 3G iPhone and the first Android phone introduced in 2008. By 2013, smartphones accounted for more than half of handset sales. LTE was included in more than 50% of cellular modem chips shipped by year-end 2015.

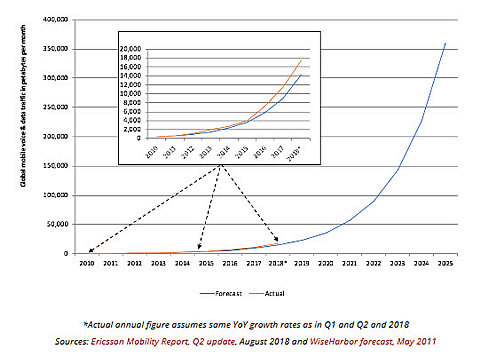

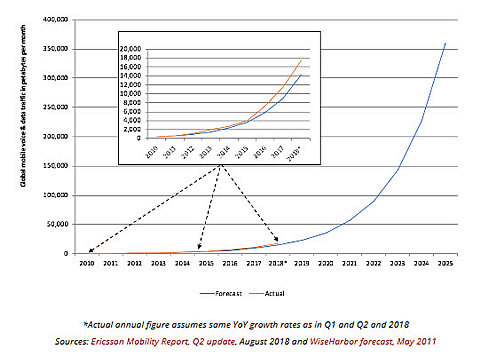

Data demand grew rapidly and is expected to continue doing so for many years. In 2011, optimistic about fast and sustained mobile data growth, I published a 15-year forecast for 1,000x mobile traffic growth globally from 2010 to 2025. My projected 58% compound annual growth rate is slightly below the actual figures tracked in Ericsson's Mobility Report between 2010 and 2018. Therefore, if the trend since 2010 continues, my 1,000x forecast will be exceeded by 2025.

Halfway to 1,000x mobile traffic growth over 15 years to 2025

Confounding compounding

Halfway through the 15-year forecasting period, traffic demand is around halfway towards 1,000x growth in logarithmic terms, as indicated by my chart's title. That is based on the same 58% annual growth rate compounding over the entire forecasting period. However, putting it another way, 2018 traffic is less than 5% of what it will be in 2025. That also dauntingly means there needs to be more than twenty times the network capacity of everything built so far. By measuring growth in percentages, rather than in the number of additional bytes, we are perceiving the world in a rather different way from that depicted graphically above.
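The compounding arithmetic in this section can be checked with a few lines of Python. This is a minimal sketch, not the forecasting model itself: the function names are illustrative, and it assumes a constant 58% annual growth rate applied from 2010, with 2018 treated as year 8 of 15.

```python
import math

ANNUAL_GROWTH = 0.58  # compound annual growth rate quoted in the article
START_YEAR = 2010
END_YEAR = 2025


def growth_multiple(rate, years):
    """Traffic multiple after compounding `rate` for `years` years."""
    return (1 + rate) ** years


def required_cagr(multiple, years):
    """Constant annual rate needed to reach `multiple` in `years` years."""
    return multiple ** (1 / years) - 1


def log_progress(rate, years_elapsed, total_years):
    """Fraction of the total growth achieved, measured on a log scale."""
    return math.log(growth_multiple(rate, years_elapsed)) / math.log(
        growth_multiple(rate, total_years)
    )


if __name__ == "__main__":
    total_years = END_YEAR - START_YEAR
    elapsed = 2018 - START_YEAR
    remaining = END_YEAR - 2018

    print(f"CAGR needed for 1,000x in 15 years: {required_cagr(1000, total_years):.1%}")
    print(f"Multiple reached by 2018: {growth_multiple(ANNUAL_GROWTH, elapsed):.1f}x")
    print(f"Multiple still to come, 2018 to 2025: {growth_multiple(ANNUAL_GROWTH, remaining):.1f}x")
    print(f"2018 traffic as a share of 2025: {1 / growth_multiple(ANNUAL_GROWTH, remaining):.1%}")
    print(f"Progress on a log scale: {log_progress(ANNUAL_GROWTH, elapsed, total_years):.0%}")
```

Under these assumptions, the remaining multiple from 2018 to 2025 comes out at roughly 25x, which puts 2018 traffic at about 4% of the 2025 level, while progress measured on a log scale is a little over half.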

The higher the annual percentage growth rate, the more significant this difference in perspectives is, particularly over many years. Einstein is often said to have called compound interest the eighth wonder of the world. Compounding effects are much more severe in mobile data because growth rates such as 58% are so much higher than interest rates in most financial markets.

Back to the engineering and physics

There are various ways that data capacity can be increased to accommodate growth in demand, and these techniques are already being implemented in LTE. Three technologies are used to achieve Gigabit LTE: 4×4 MIMO, 256 QAM, and aggregation of three or more carriers (for 60 MHz of bandwidth or more). 3GPP specifications define aggregation of up to 32 carriers for LTE. Qualcomm has introduced its X24 modem, which combines 20 spatial streams with 7x CA, 256 QAM, and 4×4 MIMO to achieve 2 Gbps. Operators are also deploying massive MIMO antenna configurations with up to 128 antenna elements (64 for transmit and 64 for receive).
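A back-of-envelope calculation shows how these figures fit together. The sketch below is an approximation rather than a 3GPP-accurate rate calculation. It assumes 20 MHz carriers with 100 resource blocks, 12 subcarriers per block and 14 OFDM symbols per millisecond (normal cyclic prefix), plus a hypothetical 0.75 factor for control overhead and channel coding. That factor is chosen only for illustration and is not taken from the article or from any specification.

```python
# Rough LTE downlink peak-rate estimate per spatial stream and in aggregate.
# All constants describe a 20 MHz LTE carrier; EFFICIENCY is an assumed,
# illustrative allowance for control overhead and channel coding.

RESOURCE_BLOCKS = 100          # per 20 MHz carrier
SUBCARRIERS_PER_BLOCK = 12
SYMBOLS_PER_MS = 14            # normal cyclic prefix
BITS_PER_SYMBOL_256QAM = 8     # log2(256)
EFFICIENCY = 0.75              # assumption, not a specified value


def per_stream_mbps(efficiency=EFFICIENCY):
    """Approximate peak rate of one spatial stream on one 20 MHz carrier."""
    resource_elements_per_second = (
        RESOURCE_BLOCKS * SUBCARRIERS_PER_BLOCK * SYMBOLS_PER_MS * 1000
    )
    raw_bps = resource_elements_per_second * BITS_PER_SYMBOL_256QAM
    return raw_bps * efficiency / 1e6


def aggregate_gbps(streams, efficiency=EFFICIENCY):
    """Approximate peak rate across `streams` spatial streams in total."""
    return streams * per_stream_mbps(efficiency) / 1000


if __name__ == "__main__":
    print(f"Per stream: {per_stream_mbps():.1f} Mbps")
    # Illustrative case: three carriers, each with four MIMO layers.
    print(f"12 streams: {aggregate_gbps(12):.2f} Gbps")
    # The 20 spatial streams quoted for the X24 modem.
    print(f"20 streams: {aggregate_gbps(20):.2f} Gbps")
```

With these assumptions each stream carries about 100 Mbps, so 20 streams land close to the 2 Gbps quoted for the X24, and three carriers with four layers each exceed 1 Gbps. Real devices may not support four layers on every aggregated carrier.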

Network densification is being achieved through cell sectorization (e.g., increasing from three, to six, and even to nine sectored cells), with cell splitting (e.g., into three or more cells) and with introduction of small cells. The latter can be costly for site acquisition and backhaul. Tens of thousands of small cells are being deployed in the US.

Nokia's capacity forecast in the first chart assumed some use of massive MIMO as well as significant cell densification with LTE.

But cellular standards up to and including LTE are only implemented in frequencies up to 5GHz, including some use of shared-spectrum and unlicensed bands in the higher frequencies. Despite these measures, LTE capacity will typically saturate for operators from around 2020, as indicated by Nokia's chart.

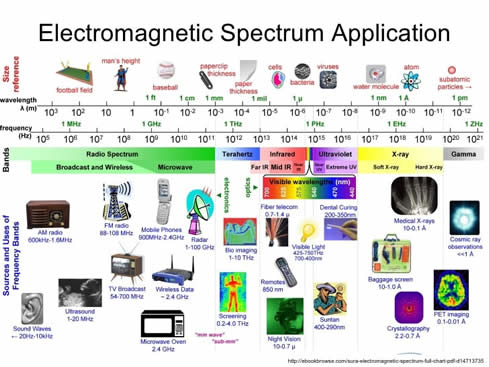

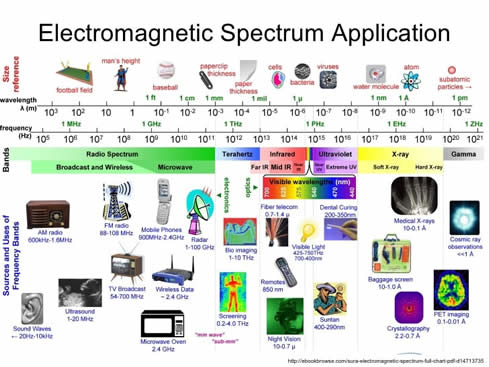

Thankfully, capacity in the electromagnetic spectrum increases at higher frequencies. That might not be immediately apparent to everyone because we invariably depict the spectrum non-linearly, on a logarithmic scale, as in the following chart. Far more spectrum can be made available above 6 GHz than below that frequency to accommodate the large amounts of additional data traffic.

Channel bandwidths proportionately wider at higher frequencies

While 5G, like previous cellular generations, will also depend on frequencies across the gamut from hundreds of megahertz upward for coverage and control signalling, technical development and standardization work for frequencies above 6 GHz will be focused on 5G. Spectrum above 6 GHz, and particularly mmWave (strictly speaking, above 30 GHz), presents additional engineering challenges. Propagation limitations and higher penetration loss will be mitigated by massive MIMO, beam steering, beam tracking, dual connectivity, carrier aggregation, and small-cell architectures with self-backhauling.

5G will, therefore, be the only way to access the large swaths of additional bandwidth available at those higher frequencies. According to Allnet, the top-five US cellular licensees last year held a total of only 697 MHz of spectrum at frequencies up to around 2.5 GHz. AT&T and Verizon each acquired hundreds of megahertz of spectrum at 28 GHz or 39 GHz in the last year. According to a recent report by 5G Americas, a total of between 5.5 GHz and potentially 9.35 GHz of spectrum could become available in the US.
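To put those spectrum figures side by side, the short sketch below computes how many times larger the potential new US allocation is than the 697 MHz held by the top-five licensees. The constant and function names are illustrative. The comparison is only indicative, because the 697 MHz figure covers those five licensees' holdings rather than all spectrum below 6 GHz.

```python
# Compare potential new high-band spectrum with existing holdings,
# using the figures quoted in the article (all values in MHz).

TOP_FIVE_HOLDINGS_MHZ = 697   # top-five US licensees, up to about 2.5 GHz
HIGH_BAND_LOW_MHZ = 5_500     # lower estimate of potential new spectrum
HIGH_BAND_HIGH_MHZ = 9_350    # upper estimate of potential new spectrum


def spectrum_ratio(new_mhz, existing_mhz=TOP_FIVE_HOLDINGS_MHZ):
    """How many times larger the new allocation is than existing holdings."""
    return new_mhz / existing_mhz


if __name__ == "__main__":
    print(f"Lower estimate: {spectrum_ratio(HIGH_BAND_LOW_MHZ):.1f}x")
    print(f"Upper estimate: {spectrum_ratio(HIGH_BAND_HIGH_MHZ):.1f}x")
```

On these numbers the potential new spectrum is roughly 8 to 13 times the existing holdings, which is about an order of magnitude.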

What about devices more recently?

Whereas device issues including technical performance for the modem, applications processor, display and battery, as well as form factor, weight and cost all hindered the transition from 2G to 3G, the transition from 3G to LTE and 4G was stronger and faster. LTE devices soon became available with very similar form factors and battery performance to 3G devices.

Because device costs were already significantly higher due to large, high-resolution multi-touch displays and applications processors, the additional modem and RF costs for LTE were much smaller as a percentage of total device ASPs in smartphones than they were for the initial addition of WCDMA to feature phones.

Issues including fallout from the economic crisis in 2008 and the fact that HSPA+'s theoretical maximum performance was comparable to LTE initially seemed to have little impact. In 2009 and prior to the introduction of LTE, 3G data network congestion had been awful in various places including New York and San Francisco. Real-world network and device performance significantly improved with LTE because it was largely deployed on large blocks of new spectrum at low frequencies providing lots of new capacity and excellent coverage straight away.

There will also be strong demand for the transition from 4G to 5G due to growth in mobile broadband. One potential hurdle is that devices are no longer so commonly or heavily subsidized by mobile operators, and 5G radios need complex RF electronics including new antennas. Increased spectral efficiency with the new standard is an economic incentive for operators, but network capacity is a direct concern for operators rather than users. How much more will end-users be willing to pay? When consumers start using 5G, that will relieve the 4G networks and provide improved performance for those who only have legacy 4G devices. A killer app that can exploit the unique capabilities of 5G eMBB, such as UHD or XR, would be very helpful in stimulating user demand for upgrades. Industrial applications and IoT will come later, and so economic viability for 5G will stand or fall based on eMBB.

Checkmarks for demand, supply and devices

5G uptake will be strong because it is the only way to satisfy an expected twenty-fold growth in demand for mobile broadband data between 2018 and 2025. Capacity improvements to LTE networks with enhanced technologies and cell densification will be saturating from 2020 for typical carriers in the US and elsewhere. Frequencies above 6GHz will provide at least an order of magnitude more spectrum for 5G than is available below that threshold for all cellular standards. The need for new devices should not be a major impediment to 5G migration, particularly if they can clearly offer significant benefits over 4G and if operators are willing to absorb some of the additional costs.